Quick Answer: The rag vs llm comparison is not really a competition between two alternatives across the architecture decision. RAG (Retrieval-Augmented Generation) is an architectural pattern that is using LLMs (Large Language Models) plus retrieved external context to generate better responses. Pure LLM applications are relying only on training data, while RAG applications are retrieving relevant documents from a knowledge base and passing them to the LLM at query time. Use pure LLM for general reasoning tasks across creative workflows, then use RAG when you are needing accurate answers about specific documentation, current information or domain-specific knowledge.

Most discussions of rag vs llm are missing the point that the two are not really alternatives competing for the same role. RAG is a technique built on top of LLMs to make them work with information they were not trained on. Founders evaluating AI architecture, developers choosing between approaches and technical buyers comparing AI product capabilities are all running into this question across 2026. By the end of this guide, the real relationship between RAG and LLM, when to choose each approach and how to combine them effectively in production will be clear, let's take a look.

What Is RAG vs LLM? Understanding the Core Difference

What is rag vs llm properly framed is not a head-to-head comparison, it is actually a question about architectural patterns. An LLM, or Large Language Model, is a foundation model trained on massive text corpora that is generating outputs based on patterns learned during training. Examples are including GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro and Llama 3 across the frontier model category. LLMs are answering questions using only what they learned during training, and their knowledge cutoff is fixed across the lifetime of the model. They are hallucinating when asked about specific information they do not have stored in their parameters.

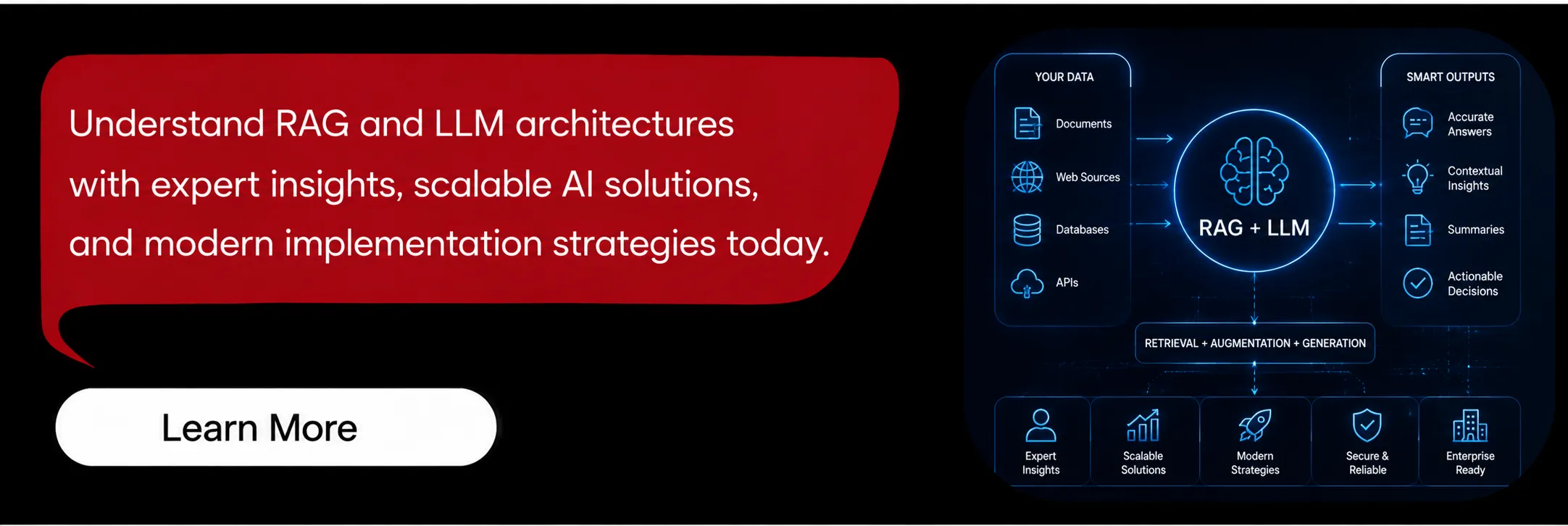

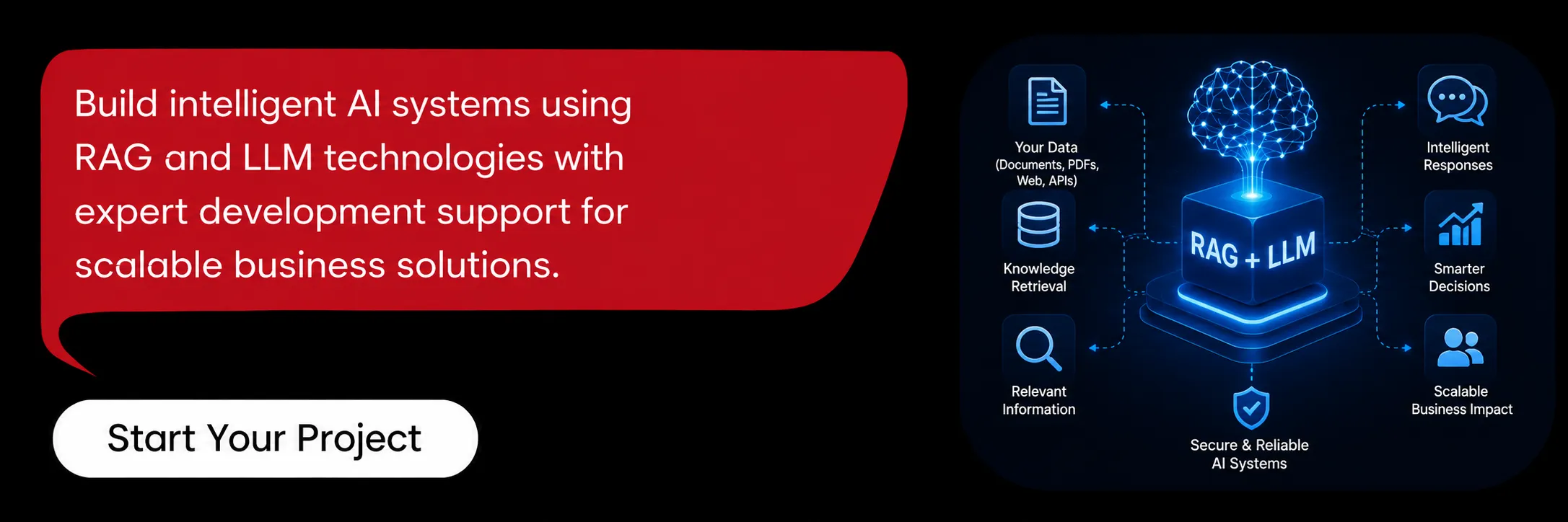

RAG, or Retrieval-Augmented Generation, is an architectural pattern that is solving both limitations of pure LLM deployments. The rag model vs llm distinction is mattering because RAG is not actually a separate model, it is a workflow that is retrieving relevant documents from a knowledge base typically stored in a vector database. Then the workflow is passing those documents to an LLM as context for response generation. The LLM is generating a response grounded in the retrieved documents rather than only its training data. RAG is combining the reasoning power of LLMs with the freshness and specificity of external knowledge sources.

RAG vs LLM Side-by-Side Comparison

Most teams searching for llm vs rag are wanting a side-by-side comparison of capabilities, costs and trade-offs across architectures. The table below is covering the dimensions that are actually mattering when deciding which architecture to use across the production deployment. Note that this comparison is treating "pure LLM application" as the baseline and RAG as an architectural augmentation rather than a replacement.

Dimension | Pure LLM | RAG |

Information source | Training data only | Training data + retrieved documents |

Knowledge cutoff | Fixed at training date | Live (whatever's in the knowledge base) |

Domain-specific accuracy | Generic, often wrong on specifics | Accurate when knowledge base is good |

Hallucination rate | Higher on specific facts | Lower (grounded in retrieved context) |

Setup complexity | Simple (just API calls) | Moderate (embedding + vector DB + retrieval logic) |

Cost per query | Lower (just LLM tokens) | Higher (embeddings, vector DB, more tokens) |

Latency | Lower (single LLM call) | Higher (retrieval step adds 100–500ms) |

Best for | General reasoning, creative tasks | Q&A on documents, customer support, research |

Example apps | Writing assistants, code generation | Customer support chatbots, document Q&A |

Maintenance | Updates with model upgrades | Requires keeping knowledge base fresh |

The llm rag vs pure-LLM comparison is revealing the real trade-off across the architecture decision in 2026. RAG is adding complexity and cost in exchange for accuracy on domain-specific information that the model was not trained on directly. The llm vs rag difference is mattering most when your application must be answering about specific data including your product documentation, customer records or current information. For applications that are needing general reasoning without domain specificity, pure LLM is simpler and faster across the build. The next section is providing a concrete decision framework to use.

When to Use RAG vs LLM — A Decision Framework

The rag vs llm choice is depending on five concrete factors that are mattering across most production deployments today. Apply each factor to your specific use case to determine which architecture is fitting best across the application. Most decisions are becoming obvious once these factors are evaluated individually rather than being judged in aggregate.

Does The Application Need Domain-Specific Knowledge?

If users are asking about your company's products, internal documentation, customer-specific information or any data the model was not trained on, RAG is required across the application. Pure LLM is hallucinating or refusing to answer such questions consistently. Customer support apps, internal knowledge tools and document Q&A systems are all needing RAG architecturally.

Does The Application Need Current Or Frequently Updated Information?

If accurate answers are requiring information newer than the model's training cutoff, typically 1+ year ago, RAG is essential across the build. News applications, financial data tools and inventory systems are all needing RAG because the LLM's training data is stale by the time queries are happening.

What Is The Acceptable Hallucination Rate?

Pure LLMs are hallucinating confidently on specific facts across consumer and enterprise applications. For applications where wrong answers are causing user harm including medical, legal and financial categories, RAG with proper source citation is reducing hallucination significantly. The llm vs rag choice is often coming down to whether hallucinations are tolerable across the user experience.

What Is The Latency Budget?

RAG is adding 100 to 500ms for retrieval before the LLM call across every query. Real-time conversational applications targeting sub-second responses may be favouring pure LLM with caching strategies layered on top. Applications where users are tolerating 2 to 5 second responses are easily accommodating RAG architecturally without user complaints.

What Is The Per-Query Cost Budget?

RAG is roughly doubling per-query cost across embedding the query, retrieving context and then larger LLM input tokens consumed. For high-volume applications, this difference is mattering significantly across the cost structure. For lower-volume professional applications, the accuracy gain is typically justifying the additional cost.

How RAG Augments LLM Capabilities | 5 Key Patterns

RAG is augmenting LLM capabilities in five distinct patterns across production deployments today. Most production RAG systems are using 2 to 3 patterns simultaneously rather than relying on a single approach across the build.

1. Knowledge Grounding

This is the most common RAG pattern across production deployments today. It is retrieving relevant documents from a knowledge base and passing them as context to the LLM during generation. The LLM is generating responses grounded in retrieved content rather than only training data across the application. It is used in customer support chatbots, internal documentation Q&A and enterprise search applications. The key components are including:

Embedding Model: OpenAI text-embedding-3-small, Cohere embed-v3 or open-source alternatives across the embedding layer.

Vector Database: Pinecone, Weaviate, Qdrant or pgvector for production deployments across the storage layer.

Retrieval Logic: Semantic search, hybrid search or reranking based on the query type being submitted by users.

2. Fresh Information Access

This pattern is solving the LLM training cutoff problem by retrieving current information at query time. It is used for news summarisation, financial analysis and any application requiring up-to-date information across the workflow. The knowledge base is updated continuously, daily, hourly or in real-time depending on the use case, rather than waiting for model retraining. The required components are including:

Continuous Ingestion Pipeline: Automated data syncing from source systems into the vector database across the platform.

Freshness Verification: Source timestamps in retrieved chunks so the LLM can be communicating recency to users transparently.

3. Source Citation And Verification

RAG is enabling LLMs to cite specific source documents for every claim being made in the response. Users can verify information directly rather than trusting model outputs blindly across the workflow. This is critical for medical, legal and financial applications where accuracy must be verifiable across the response. The required components are including:

Document Metadata Preservation: Source URLs, timestamps and titles attached to each chunk being stored in the vector database.

Citation Generation: Prompts that are instructing the LLM to cite sources inline with responses across every output.

Verification UI: Frontend elements that are linking citations back to source documents across the user interface.

4. Multi-Document Synthesis

This pattern is retrieving multiple relevant documents and synthesising information across them in a single response. Useful for research applications, competitive analysis and complex Q&A across enterprise use cases. The LLM is comparing, contrasting or combining information from multiple sources during the response generation step. The required components are including:

Multi-Vector Retrieval: Returning top-K results rather than a single best match across the retrieval step.

Reranking Logic: Filtering retrieved documents for relevance before synthesis is happening at the LLM layer.

5. Conversation Memory

RAG is retrieving prior conversation history alongside knowledge base documents to maintain context in long conversations across the customer journey. This is particularly valuable for customer support applications where users are returning to previous interactions. The LLM is accessing both factual knowledge and conversational context simultaneously across the response. The required components are including:

Conversation Storage: Database tracking user conversations with timestamps and metadata across the customer base.

Context Window Management: Retrieval logic that is prioritising recent versus older conversation context within the model's context window.

Performance, Cost, and Accuracy Trade-offs

The llm vs rag choice is producing measurable trade-offs across performance, cost and accuracy in production. Real numbers from production deployments are mattering more than theoretical comparisons across the architectural decision. The trade-offs below are reflecting typical production deployments at moderate scale of 10K to 1M queries per month.

Latency Impact: Pure LLM responses are averaging 1 to 3 seconds, while RAG is adding 100 to 500ms for retrieval, totalling 1.5 to 4 seconds end-to-end.

Cost Per Query: Pure LLM is costing $0.01 to $0.30 per query, while RAG is adding $0.001 to $0.01 for embedding plus increased token costs from longer context.

Accuracy On Domain-Specific Questions: Pure LLM accuracy is dropping to 30 to 60% on company-specific questions, while RAG is typically achieving 80 to 95% with quality knowledge bases.

Hallucination Rate: Pure LLM is hallucinating on 15 to 40% of specific factual questions, while RAG with source grounding is reducing this to 3 to 10% reliably.

Setup Complexity: Pure LLM is shipping in days across simple integrations, while production-grade RAG is taking 2 to 6 weeks to build and tune properly.

Maintenance Burden: Pure LLM is upgrading with model versions automatically, while RAG is requiring ongoing knowledge base curation, embedding refreshes and retrieval tuning.

Token Consumption: RAG queries are consuming 3 to 10x more LLM input tokens due to the retrieved context being included in every request.

The trade-offs are not binary across the architecture decision in 2026. Production teams are typically deploying both architectures across different application surfaces within the same product. Customer-facing Q&A is using RAG, while internal creative writing tools are using pure LLM consistently. Choosing based on the specific use case rather than dogmatic preference is producing better outcomes than universal architectural decisions across the company.

Real-World Examples | RAG vs Pure LLM Applications

Production applications are falling on either side of the RAG vs pure LLM line consistently. The examples below are illustrating which architecture each leading AI application is using and why that choice was made. The pattern is consistent across the category, domain specificity is determining the choice every time.

ChatGPT Plus (Default): Pure LLM for general queries, using RAG (Browse with Bing) when current information is needed across the conversation.

Claude Projects: RAG-based architecture retrieving from uploaded documents to answer questions about specific content the user shared.

Perplexity AI: RAG-based architecture that is searching the web and synthesising answers with citations across every query.

GitHub Copilot: Hybrid architecture using pure LLM for code generation with optional context retrieval from your repository across the workflow.

Customer Support Chatbots (Intercom Fin, Drift): RAG-based architecture retrieving from the company's help centre and documentation across customer questions.

Jasper / Copy.ai: Pure LLM architecture because creative writing is not needing domain-specific retrieval across the user workflow.

Notion AI: RAG-based architecture retrieving from your Notion workspace to answer questions about your documents and content.

Cursor IDE: Hybrid architecture using pure LLM for code suggestions and RAG for repository-aware answers across the developer workflow.

The pattern is clear across the production application landscape today. Applications where users are asking about specific external information are using RAG architecturally, while applications where users are wanting general reasoning, writing or creativity are using pure LLM. Most successful products are using both approaches based on context across different surfaces.

Limitations of RAG and LLM Approaches

Both approaches in the llm vs rag comparison are having real limitations across production deployments today. Honest understanding of where each is failing is preventing architectural mistakes that are surfacing only after production deployment is live.

Pure LLM limitations across production deployments today :

Hallucinations On Specific Facts: Models are confidently stating false information about specific people, companies or events across responses.

Knowledge Cutoff Staleness: Information from after the training date is missing entirely from the model's knowledge across queries.

No Source Verification: Users cannot verify where answers are coming from across the response generation workflow.

No Custom Domain Knowledge: Models cannot answer about your specific products, customers or documentation accurately across queries.

Limited Reasoning On Complex Facts: Multi-hop reasoning across specific facts is often failing in pure LLM deployments today.

RAG limitations across production deployments :

Retrieval Quality Determines Output Quality: Bad retrieval is producing bad responses regardless of model quality across the workflow.

Knowledge Base Curation Burden: Stale or incomplete knowledge bases are creating wrong answers across the customer experience.

Chunk Boundary Issues: Document chunking is splitting context unnaturally, causing missed connections across long documents.

Latency Penalty: Retrieval is adding 100 to 500ms to every query across the production workload.

Higher Operational Cost: Vector database, embedding API and increased LLM tokens are compounding costs across the lifecycle.

The most production failures are coming from underestimating RAG's curation burden across the lifecycle. Teams are building retrieval infrastructure then neglecting the ongoing knowledge base maintenance required for accurate answers across the customer base.

Hybrid Approaches — Combining RAG, LLM, and Fine-Tuning

Production AI applications are rarely using pure RAG or pure LLM exclusively across the architecture. The four hybrid patterns below are combining architectural approaches to capture benefits of each while mitigating individual weaknesses. Hybrid is the default for serious production deployments in 2026 across both consumer and enterprise AI products.

RAG Plus Fine-Tuning: Fine-tune the base LLM to better understand domain-specific terminology, then use RAG for current information across queries. Common in medical, legal and financial applications where models are needing both specialised vocabulary and access to current case data across responses.

Routing Between RAG And Pure LLM: A classification step is determining whether each query needs retrieval or general reasoning across the workflow. Customer support apps are routing product questions to RAG and general help questions to pure LLM. This is reducing cost while maintaining accuracy across the customer base.

Multi-Hop RAG With Agents: Agent loops are retrieving information progressively, refining queries based on initial results across the workflow. Used for complex research tasks where each retrieval step is informing the next query in the chain. It is producing deeper analysis than single-shot RAG but at higher latency and cost.

Caching Plus RAG: Cache common RAG responses to avoid recomputing for frequent queries across the workload. Particularly effective for customer support where many users are asking similar questions across the customer base. This is reducing per-query cost 60 to 80% while maintaining freshness for novel queries.

The pattern across successful production AI products is consistent across the industry today. Start with the architecture that is best fitting the dominant use case, then add hybrid patterns as the application is maturing and edge cases are emerging in production traffic.

Conclusion

The rag vs llm question is dissolving once you are understanding that RAG is augmenting rather than replacing LLM across the architecture. Pure LLM is winning for general reasoning, creativity and tasks not requiring specific information across the workflow. RAG is winning when accuracy on domain-specific or current information is mattering most to the user experience. Most production applications are combining both with routing logic across different surfaces. The right architectural decision is depending on five concrete factors covered earlier including domain specificity, freshness requirements, accuracy thresholds, latency budgets and per-query cost. For deeper reads, explore our LLM application development guide and the AI integration cluster posts.