If you work in financial services, you already feel the squeeze. Margins are thinner than they were five years ago and regulations keep stacking up. Customers want the same instant experience they get from their favorite consumer apps. And somewhere nearby, a startup or a forward-thinking competitor is quietly using AI to do what your team does, only faster, cheaper and with fewer errors.

That pressure is why AI in fintech has gone from a side experiment to a board-level priority. The market data backs it up: the global AI in fintech market sits at roughly $36.61 billion in 2026 and is headed toward $99.09 billion by 2031, expanding at a 22.04% CAGR. North America holds about 37% of that market today, but Asia-Pacific is growing fastest at over 33% CAGR, driven by China's heavy generative AI spending and widespread mobile payment adoption.

These are not just random vendor projections. This is happening right now. JPMorgan reported a 20% jump in sales between 2023 and 2024 and the bank credits its generative AI tools for much of that lift. They have since rolled out their GenAI toolkit to over 200,000 employees. Morgan Stanley uses OpenAI powered tools to support financial advisors. Goldman Sachs has teams using AI for IPO prospectus drafting and internal research. The biggest financial institutions in the world are not running pilots anymore. They are running production systems.

But here is what most articles about this topic leave out: the technology itself is not the hard part. The hard part is making AI work inside the messy reality of financial regulation, legacy infrastructure, data silos and customer trust. That takes thoughtful architecture, domain specific data pipelines, rigorous testing against regulatory standards and a user experience that builds confidence instead of confusion.

This guide is for those who are done with the hype and ready to build. We cover what AI fintech software development actually looks like, the trends shaping 2026, a practical seven-step build process and the use cases producing the best returns today.

What is AI fintech software development?

AI fintech software development means designing, building and deploying financial technology products where artificial intelligence and machine learning do real work, not just sit on top as a feature label. These are systems where algorithms make actual decisions: approving or declining a loan, flagging a suspicious transaction, rebalancing an investment portfolio, or filing a regulatory report.

The difference from traditional fintech development comes down to how decisions get made. In conventional software, you write fixed rules. If a transaction exceeds $10,000, flag it. If a credit score falls below 620, decline the application. The system only knows what you code into it. With machine learning in fintech, the system learns from patterns in historical and live data. It picks up on relationships human analysts might miss, like a specific combination of transaction speed, device fingerprint and geolocation that predicts fraud more reliably than any single threshold ever could.
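To make that contrast concrete, here is a minimal Python sketch. It is an illustration, not a production design: the signal names, weights and bias are hypothetical stand-ins for what a trained model would learn from data, and the $10,000 threshold is the one from the example above.

```python
import math

def rule_based_flag(amount):
    """Conventional approach: one fixed, hand-coded threshold."""
    return amount > 10_000

# Hypothetical weights standing in for what a trained model would learn.
WEIGHTS = {"tx_per_hour": 0.8, "new_device": 1.5, "geo_mismatch": 2.0}
BIAS = -6.0

def model_score(signals):
    """Combine several signals into one fraud probability (logistic form)."""
    z = BIAS + sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))

# A small transaction that the fixed rule ignores but the combined signals flag.
suspicious = {"amount": 180.0, "tx_per_hour": 5, "new_device": 1, "geo_mismatch": 1}
# An ordinary transaction from a known device in the usual location.
routine = {"amount": 180.0, "tx_per_hour": 1, "new_device": 0, "geo_mismatch": 0}

print(rule_based_flag(suspicious["amount"]), round(model_score(suspicious), 2))
print(rule_based_flag(routine["amount"]), round(model_score(routine), 2))
```

Both transactions are far below the fixed threshold, so the rule treats them identically. The scored version separates them because it looks at the combination of signals rather than any single one.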

AI powered banking software can underwrite loans in seconds rather than days, not because it cuts corners, but because it processes thousands of variables at once and weights them against models trained on millions of past outcomes. AI credit scoring software can evaluate borrowers with thin or missing credit histories by pulling in alternative data: utility payments, rental history, cash flow patterns and behavioral signals from digital banking activity. Zest AI has built its business around this exact idea and they report over 50% average annual customer growth by making lending accessible to people traditional scoring models would reject.

Development here also comes with problems you do not see in other software categories. Financial data is heavily regulated, so every model has to be explainable and auditable. A neural network that cannot tell a regulator why it declined someone's loan application is a liability, not a product. Data pipelines need to handle sensitive personal information under GDPR, CCPA and rules from bodies like the OCC and FCA. And the cost of failure is concrete: a bug in a recommendation engine is annoying, but a bug in a fraud model loses real money and destroys real trust.

This is where your choice of development partner matters. A specialized AI Development Company brings the domain expertise you need to navigate model governance, financial regulation and production ML infrastructure all at once. Generic software shops can build you a working app, but they tend to stumble at the intersection of these three.

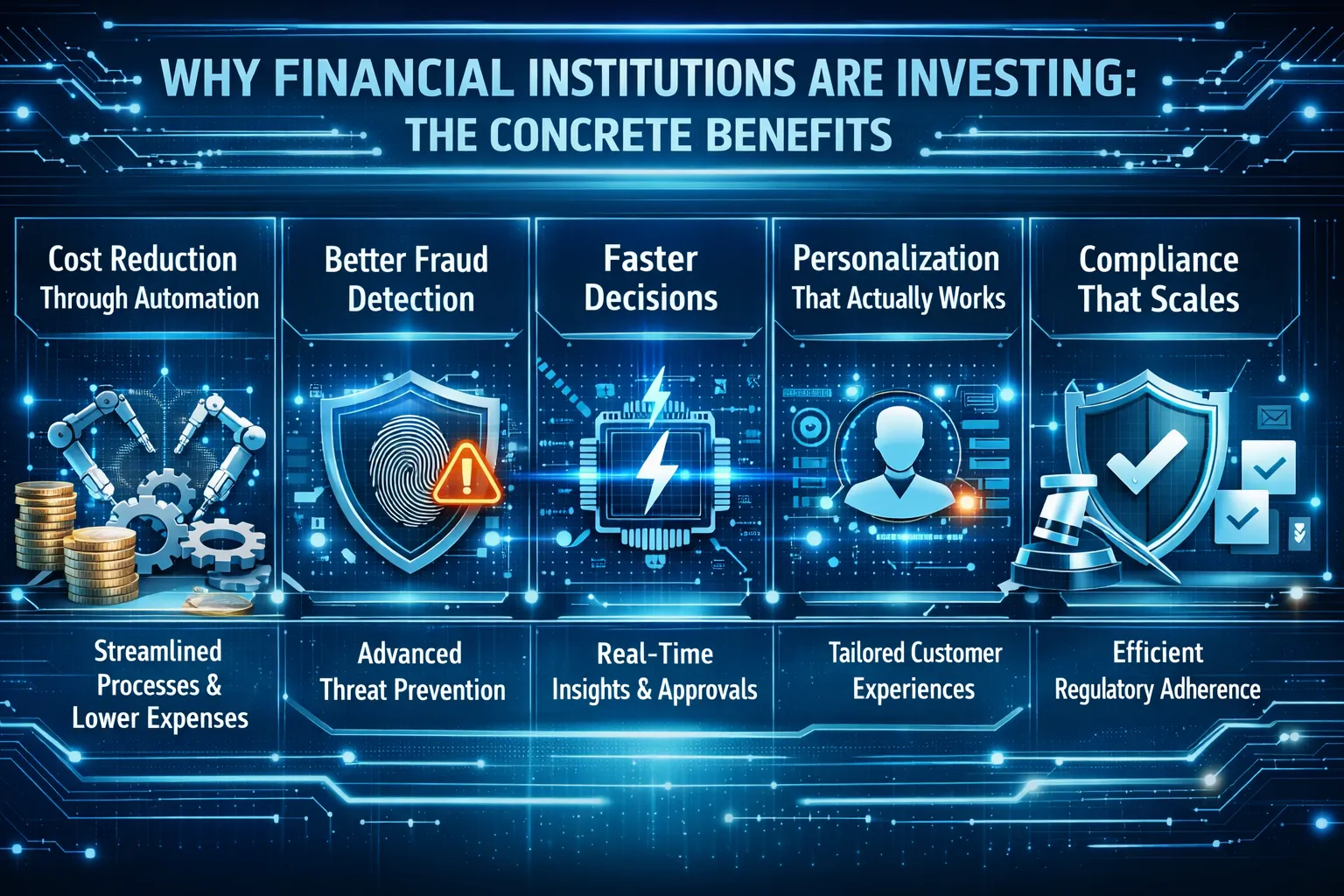

Why financial institutions are investing: the concrete benefits

The benefits of AI in fintech are not abstract. They are measured results that banks and fintech companies are reporting today.

Cost reduction through automation: Compliance reviews, document processing, customer service calls, fraud investigations: these eat up enormous budgets when done manually. AI handles the high volume, repetitive parts of these workflows so human teams can focus on judgment calls. Bank of America's AI assistant Erica has fielded over 2 billion customer interactions since launch. 98% of users got their answers without ever talking to a human agent. Across the industry, AI chatbots are projected to save banks over $80 billion in service costs.

Better fraud detection: Old rule based fraud systems throw up false alarms constantly while missing the more sophisticated attacks. ML models process hundreds of behavioral and transactional signals at once, adapting as fraud tactics change. Over 60% of financial institutions now use machine learning for real time fraud detection and some have cut fraud losses by up to $20 million per year. About 42% of card issuers have saved more than $5 million within two years of deploying AI for payment fraud prevention.

Faster decisions: AI compresses timelines that used to take days into seconds. Loan approvals that needed 48 hours of manual review now happen in under 10 minutes with AI powered underwriting. About 40% of loan approvals already incorporate AI analysis, cutting processing times by roughly 30%.

Personalization that actually works: AI lets financial institutions deliver individually tailored products and recommendations to millions of people at once. Banks that execute this well see a 25% bump in customer satisfaction and a 15% drop in operating costs. AI powered robo-advisors now manage roughly $1.9 trillion in assets globally and that number is heading toward $2.8 trillion.

Compliance that scales: Financial institutions deal with an average of 234 regulatory notices per day across jurisdictions. AI RegTech compliance software monitors, sorts and maps these changes against internal policies automatically. No human team can keep up with that volume manually without missing things.

AI fintech trends to watch in 2026

The AI in fintech space is moving fast and 2026 is the year when several technologies are graduating from pilot programs to production. If you are planning a build or updating your roadmap, these trends deserve your attention.

Generative AI going from experiments to daily operations

The real story in 2026 is not whether banks will adopt generative AI. It is how deeply they are weaving it into everyday work. JPMorgan's push to scale from 450 to over 1,000 AI use cases is a signal of where the industry is headed, not an exception. Morgan Stanley already uses OpenAI powered tools to support 16,000+ financial advisors. The shift is from AI as a standalone project to AI as something embedded across trading desks, compliance teams, customer support and back office processing at the same time.

What has changed is the level of control. Banks are deploying retrieval-augmented generation (RAG) architectures that tie model outputs to verified internal data, which brings hallucination rates down to acceptable levels for regulated environments. Fine tuned models built on domain specific financial data are replacing generic off the shelf solutions.
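The retrieval half of a RAG setup can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the policy snippets are invented, retrieval uses plain word overlap where a real system would typically use embeddings and a vector store, and the resulting prompt would be passed to whichever language model the bank has approved.

```python
import re

# Hypothetical internal policy snippets standing in for a verified knowledge base.
DOCUMENTS = [
    {"id": "policy-001", "text": "Wire transfers above the internal review limit require dual approval."},
    {"id": "policy-002", "text": "Customers may dispute a card transaction within 60 days of the statement date."},
    {"id": "policy-003", "text": "Dormant accounts are reviewed annually by the operations team."},
]

def tokenize(text):
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=1):
    """Rank documents by word overlap with the question and keep the top k."""
    question_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_tokens & tokenize(doc["text"])),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question, passages):
    """Tie the model's answer to the retrieved passages and ask for citations."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context below and cite the passage id. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

question = "How long does a customer have to dispute a card transaction?"
passages = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, passages)
print(prompt)
```

The design point is that the model is asked to answer from cited internal text, which gives reviewers something concrete to audit when an answer looks wrong.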

Open banking rules creating new AI opportunities

Europe's PSD3 directive and similar frameworks elsewhere are reshaping how AI can be used. Mandatory data sharing rules now give AI systems consistent, permissioned access to bank records across institutions. That opens the door to real time credit scoring and personalized financial products that were not technically possible before. For mid-size banks, this levels the playing field because the same API infrastructure required for compliance also feeds ML models with data that used to be locked in silos.

This is driving demand for Fintech Software Development teams that understand modern AI infrastructure and the regulatory rules around data access.

Autonomous compliance agents

Compliance has always been labor heavy. In 2026, the more advanced financial institutions are deploying AI agents that continuously track regulatory changes, compare them to internal policies, flag gaps and draft remediation plans. AI RegTech compliance software is shifting from catching violations after they happen to predicting compliance risks before they materialize. The services segment of the AI fintech market is projected to grow at 27.95% CAGR through 2031, largely because banks need partners to build and manage these GenAI compliance pipelines.

Explainable AI becoming a regulatory must-have

Regulators around the world are pushing toward mandatory model explainability, especially for decisions that affect consumers directly, like credit approvals and insurance pricing. In 2026, this is not a best practice. It is becoming law. Development teams that do not design interpretability into their models from the start will hit walls during regulatory review. Techniques like SHAP values, LIME and attention visualization are now standard parts of the ML pipeline at most serious financial institutions.
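For a sense of what an explanation looks like in practice, here is a small Python sketch. It assumes a simple linear credit model with hypothetical coefficients and portfolio averages. Each feature's contribution is its coefficient times the applicant's distance from the baseline, which for a linear model with independent features corresponds to what SHAP reports. Non-linear models need a dedicated library.

```python
# Hypothetical coefficients of an already-trained linear credit model.
COEFFICIENTS = {"debt_to_income": -3.0, "months_on_time": 0.05, "recent_inquiries": -0.4}

# Hypothetical portfolio averages used as the comparison baseline.
BASELINE = {"debt_to_income": 0.30, "months_on_time": 24, "recent_inquiries": 1}

def explain(applicant):
    """Return per-feature contributions to the score, most negative first."""
    contributions = {
        name: COEFFICIENTS[name] * (applicant[name] - BASELINE[name])
        for name in COEFFICIENTS
    }
    return sorted(contributions.items(), key=lambda item: item[1])

applicant = {"debt_to_income": 0.55, "months_on_time": 6, "recent_inquiries": 4}

# The features at the top of the list are the strongest reasons for a decline.
for feature, contribution in explain(applicant):
    print(f"{feature}: {contribution:+.2f}")
```

A ranked list like this is the raw material for an adverse action notice: it tells the analyst, the customer and the regulator which factors pulled the score down the most.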

Consortium fraud models

No single bank sees enough data to catch every fraud pattern on its own. The trend now is toward shared AI models where multiple banks and payment processors contribute anonymized data to a common fraud detection system. Federated learning and differential privacy make this work without exposing anyone's customer data. More than three out of five financial organizations already use advanced ML for real time fraud detection.
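The core aggregation step of federated learning is simple enough to sketch in Python. This is a minimal illustration of federated averaging with invented numbers: each participant trains locally and shares only model weights and a sample count, never customer records. The differential privacy noise a real consortium would add is left out to keep the example short.

```python
def federated_average(updates):
    """Combine locally trained weights, weighted by each participant's sample count."""
    total_samples = sum(n for _, n in updates)
    n_weights = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(n_weights)
    ]

# Hypothetical local updates: (model weights, number of local training samples).
bank_updates = [
    ([0.2, 1.0], 1000),  # Bank A
    ([0.4, 0.0], 3000),  # Bank B
]

global_weights = federated_average(bank_updates)
print(global_weights)
```

Bank B contributes three times as many samples, so the shared model sits closer to its weights. The raw transactions behind those weights never leave either institution.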

The talent gap keeps getting worse

Here is a trend that does not get enough attention: demand for AI professionals who also understand financial services exceeds supply by about four to one. This drives up salaries, delays projects and forces hard decisions about building internally versus hiring outside help. Organizations that cannot assemble internal AI teams fast enough are increasingly partnering with specialized firms, which turns partner selection into a strategic decision rather than a procurement checkbox.

How to build AI fintech software: a step by step process

Building AI fintech software is not a straight-line project. It is iterative, it requires people from multiple disciplines working together and it is shaped at every stage by whichever regulations apply. That said, successful teams tend to follow a consistent sequence. Here is what that looks like.

Step 1: Define the problem and regulatory scope

"We want to use AI for fraud detection" is not a problem statement. It is a category. A real problem statement looks more like this: "We are losing $4.2 million annually to first party fraud on card-not-present transactions and our current rule based system has a 38% false positive rate that is driving customer churn."

At the same time, map out which regulations apply. If you are building AI credit scoring software, you need to understand fair lending laws (ECOA and the Fair Housing Act in the US), adverse action notice requirements and the Fed's model risk management guidelines (SR 11-7). If you are building KYC AML automation software, BSA/AML requirements and FinCEN reporting obligations are your starting point. Treating this as something the compliance team will sort out later is the fastest way to build something that cannot ship.

Step 2: Audit and prepare your data

AI is only as good as the data it trains on and in financial services, data is usually scattered across legacy systems, inconsistently formatted and locked behind strict access controls. You need a thorough audit: what data exists, where it lives, how clean it is and what biases might be baked into the historical records. If past lending decisions were biased, a model trained on that data will reproduce those same biases.

Data preparation typically eats up 60-70% of total project effort in ML work. In fintech, that share tends to run even higher because of privacy and audit requirements.
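A first pass at the audit described above can be as simple as the following Python sketch. The records, field names and segments are invented for illustration. It checks two things: how much of a field is missing, and whether historical approval rates differ sharply between segments, which is an early warning that a model trained on this data may inherit a bias.

```python
from collections import defaultdict

# Hypothetical historical lending records; None marks a missing value.
RECORDS = [
    {"income": 52000, "segment": "A", "approved": 1},
    {"income": None,  "segment": "A", "approved": 1},
    {"income": 48000, "segment": "A", "approved": 1},
    {"income": 61000, "segment": "A", "approved": 0},
    {"income": 39000, "segment": "B", "approved": 1},
    {"income": None,  "segment": "B", "approved": 0},
    {"income": 45000, "segment": "B", "approved": 0},
    {"income": 57000, "segment": "B", "approved": 0},
]

def missing_rate(records, field):
    """Share of records where the field has no value."""
    return sum(1 for r in records if r.get(field) is None) / len(records)

def approval_rate_by_group(records, group_field):
    """Historical approval rate for each value of group_field."""
    totals, approved = defaultdict(int), defaultdict(int)
    for r in records:
        totals[r[group_field]] += 1
        approved[r[group_field]] += r["approved"]
    return {group: approved[group] / totals[group] for group in totals}

rates = approval_rate_by_group(RECORDS, "segment")
# Ratio of the lowest to the highest approval rate; values far below 1 deserve a closer look.
rate_ratio = min(rates.values()) / max(rates.values())

print(missing_rate(RECORDS, "income"), rates, round(rate_ratio, 2))
```

A low ratio does not prove discrimination on its own, but it tells you where to dig before any training starts.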

Step 3: Pick the right technology stack

Your tech choices need to balance performance, scalability, security and regulatory compliance. That means decisions about cloud providers (AWS, GCP, Azure, each with different financial services certifications), ML frameworks (TensorFlow, PyTorch), data processing tools (Spark, Kafka for streaming) and model serving infrastructure. Hybrid setups that keep sensitive data on premises while using cloud for inference are growing at a 27.4% CAGR.

If you are not sure what the total investment looks like, it helps to study Software Development Cost structures early. AI projects carry extra cost layers: data engineering, model training compute and ongoing monitoring infrastructure on top of normal development expenses.

Step 4: Build and train your models

Choose modeling approaches that fit the problem: supervised learning for fraud classification, gradient boosted trees for credit scoring, NLP for document processing, reinforcement learning for dynamic pricing.

Three principles are worth following here. First, start simple. A well tuned logistic regression model will often beat a poorly configured deep learning model and it is far easier to explain to a regulator. Second, measure what matters to the business, not just abstract accuracy. In fraud detection, precision and recall at specific thresholds matter because a missed fraud case carries a very different cost than a blocked legitimate transaction. Third, build for fairness from day one. Run disparate impact analyses across protected groups and document everything. Regulators will ask.
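The second principle is easier to see with numbers. The Python sketch below uses a handful of invented model scores and labels, plus hypothetical dollar costs for a missed fraud case and a false alarm, to show how precision, recall and business cost shift as the decision threshold moves.

```python
# Hypothetical model scores and true labels (1 = fraud, 0 = legitimate).
SCORES = [0.95, 0.80, 0.60, 0.40, 0.30, 0.10]
LABELS = [1, 1, 0, 1, 0, 0]

def confusion_counts(scores, labels, threshold):
    """Count true positives, false positives and false negatives at a threshold."""
    tp = fp = fn = 0
    for score, is_fraud in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_fraud:
            tp += 1
        elif flagged:
            fp += 1
        elif is_fraud:
            fn += 1
    return tp, fp, fn

def precision_recall(scores, labels, threshold):
    tp, fp, fn = confusion_counts(scores, labels, threshold)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def expected_cost(scores, labels, threshold, cost_missed_fraud, cost_false_alarm):
    """Business cost at a threshold: missed fraud and false alarms are priced differently."""
    _, fp, fn = confusion_counts(scores, labels, threshold)
    return fn * cost_missed_fraud + fp * cost_false_alarm

# Hypothetical costs: a missed fraud case is far more expensive than a false alarm.
thresholds = [0.35, 0.5, 0.7]
best_threshold = min(
    thresholds,
    key=lambda t: expected_cost(SCORES, LABELS, t, cost_missed_fraud=500, cost_false_alarm=20),
)

for t in thresholds:
    print(t, precision_recall(SCORES, LABELS, t))
print("lowest-cost threshold:", best_threshold)
```

With these assumed costs, the lowest threshold wins even though it has the lowest precision, because each missed fraud case costs far more than a false alarm. Change the cost ratio and the answer changes, which is exactly why the threshold is a business decision and not just a modeling one.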

Bringing in an experienced AI/ML Development Company can speed this phase up considerably. With demand for AI talent in financial services outstripping supply by four to one, most teams, even large ones, benefit from outside expertise.

Step 5: Design the user experience

Models that people cannot use are models that do not matter. A fraud analyst does not just need a risk score. They need to see which signals drove that score so they can decide what to do next. A customer applying for credit needs a fast, clear experience, not a loading screen. Millennials and Gen Z make up about 79% of the fintech user base in high growth markets like Southeast Asia and they treat mobile first, instant interactions as the bare minimum.

Working with UI/UX design services that understand financial applications, where trust, clarity and regulatory disclosures all compete for screen space, pays off throughout the product lifecycle.

Step 6: Test rigorously before deployment

Testing AI fintech software goes well beyond standard QA. You need to validate model performance across different data slices, stress test edge cases, run adversarial tests (can someone game the model?) and run a full regulatory review. Shadow deployment, where you run the new model alongside your existing system and compare outputs, is a proven approach for high stakes financial applications.
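Shadow deployment can be sketched in Python as follows. Both decision functions and the transactions are hypothetical placeholders. The point is the pattern: only the existing system's decision reaches the customer, while the candidate model's decision is recorded for comparison.

```python
def current_model(tx):
    """Existing production logic (hypothetical): flag large transactions."""
    return tx["amount"] > 5000

def candidate_model(tx):
    """New model under evaluation (hypothetical): also considers the device."""
    return tx["amount"] > 5000 or (tx["new_device"] and tx["amount"] > 1000)

def shadow_compare(transactions, live, shadow):
    """Serve the live decision; log every case where the shadow model disagrees."""
    served, disagreements = [], []
    for tx in transactions:
        live_decision = bool(live(tx))
        shadow_decision = bool(shadow(tx))
        served.append(live_decision)  # only this decision affects the customer
        if live_decision != shadow_decision:
            disagreements.append((tx["id"], live_decision, shadow_decision))
    return served, disagreements

transactions = [
    {"id": 1, "amount": 7000, "new_device": False},
    {"id": 2, "amount": 1500, "new_device": True},
    {"id": 3, "amount": 200, "new_device": True},
]

served, disagreements = shadow_compare(transactions, current_model, candidate_model)
print(served)
print(disagreements)
```

The disagreement log is what reviewers study before promotion: each entry is a case where the new model would have behaved differently, without any customer having been affected by it.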

Step 7: Deploy, monitor and iterate

Shipping is the starting line, not the finish. AI models degrade over time as the real world data they encounter drifts away from their training data. In fintech, where market conditions, customer behavior and fraud tactics change constantly, continuous monitoring is not optional.

Set up automated alerts for performance drops, data quality problems and fairness drift. Establish retraining schedules, whether monthly, quarterly, or triggered by performance thresholds and keep a clear audit trail of every model version and how it performed. This ongoing maintenance is one of the most underestimated parts of AI in fintech and it is the main reason the services segment of this market is growing at nearly 28% CAGR.
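One common way to put a number on drift is the population stability index (PSI), which compares how a feature or score was distributed at training time with how it is distributed in live traffic. The Python sketch below is a minimal version with invented values and bin edges. The 0.25 alert level is a widely used rule of thumb, not a regulatory standard, so treat it as an assumption to tune.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population stability index between a training sample and a live sample."""
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # The bin index is the number of edges the value has reached or passed.
            counts[sum(1 for e in edges if v >= e)] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e_shares, a_shares = bin_shares(expected), bin_shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))

# Hypothetical model scores at training time versus in recent live traffic.
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.7, 0.95]
EDGES = [0.25, 0.5, 0.75]

ALERT_LEVEL = 0.25  # rule-of-thumb cutoff, an assumption to adjust per model
drift = psi(training_scores, live_scores, EDGES)
if drift > ALERT_LEVEL:
    print(f"PSI {drift:.2f} exceeds {ALERT_LEVEL}: investigate and consider retraining")
```

Running a check like this on a schedule, and storing the result next to the model version, gives you both the alert and the audit trail.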

Use cases of AI in fintech software

The benefits of AI in fintech are clearest when you look at specific problems where intelligent automation produces measurable results. Here are the applications generating the strongest returns, with named examples.

Fraud detection and prevention

Fraud is an arms race and rule based systems are on the losing side. Old fraud detection flags any transaction over $5,000, any purchase from a new device, any international transfer. These rules produce mountains of false alarms while missing the attacks that fall below their fixed thresholds.

ML models work differently. They analyze hundreds of signals at once: transaction size, merchant type, time of day, device fingerprint, behavioral patterns, network relationships. They assign risk scores in real time and adjust as new fraud tactics emerge.

What the numbers say: AI based fraud detection has cut financial losses by 40% at major platforms. About 42% of card issuers and 26% of acquirers report saving over $5 million within two years. Anomaly detection is used by 60% of financial firms. Brightwell Payments deployed an AI risk detection engine (ARDEN) specifically to protect cardholders from emerging digital fraud.

AI powered credit scoring and underwriting

Traditional credit scoring, the FICO model and its variants, works fine for people with established credit histories. It fails everyone else: young adults, recent immigrants, gig workers and anyone who has avoided traditional credit products. That is a massive market left on the table.

AI credit scoring software solves this by pulling in alternative data, including utility payments, rent, bank account cash flow and employment stability signals and running it through models that weigh thousands of variables to build a more accurate picture of creditworthiness.

What the numbers say: Zest AI reports over 50% average annual customer growth, with 175+ clients serving more than 110 million users collectively. The company expanded from credit unions into auto lending, community banking, home equity and small business finance. Around 40% of loan approvals now use AI analysis, compressing timelines while improving returns.

A good example of this in action is the credit repair case study, where AI helped consumers understand and improve their credit profiles through personalized recommendations.

KYC, AML and regulatory compliance automation

Compliance is one of the biggest cost centers in financial services and it keeps growing. Global spending on financial crime compliance alone exceeds $274 billion per year. Most of that goes toward manual work: analysts reviewing documents, checking sanctions lists, filing suspicious activity reports and responding to regulatory exams.

KYC AML automation software powered by AI cuts this burden in several ways. NLP extracts and validates information from identity documents and corporate filings. ML models score customer risk dynamically. Graph analytics map entity relationships to find hidden connections to sanctioned individuals or shell companies. And AI RegTech compliance software tracks regulatory changes across jurisdictions and flags updates that affect internal policies.
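The graph analytics piece is the least intuitive of these, so here is a small Python sketch. The entities and links are invented, and a real system would run on a graph database with far richer relationship types. The idea is a breadth-first search from a customer through ownership or payment links, stopping when it reaches a sanctioned party within a set number of hops.

```python
from collections import deque

# Hypothetical ownership and payment links between entities.
LINKS = {
    "Customer X": ["Holding Co 1"],
    "Holding Co 1": ["Shell Co 2", "Supplier 3"],
    "Shell Co 2": ["Sanctioned Person"],
    "Supplier 3": [],
    "Sanctioned Person": [],
}
SANCTIONED = {"Sanctioned Person"}

def path_to_sanctioned(start, links, sanctioned, max_hops=3):
    """Breadth-first search: return the shortest link chain to a sanctioned entity, if any."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in sanctioned:
            return path
        if len(path) - 1 >= max_hops:
            continue  # do not search beyond the hop limit
        for neighbor in links.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

print(path_to_sanctioned("Customer X", LINKS, SANCTIONED))
print(path_to_sanctioned("Customer X", LINKS, SANCTIONED, max_hops=2))
```

Returning the full chain, not just a yes or no, matters for compliance work: the analyst needs to see which intermediaries connect the customer to the sanctioned party.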

What the numbers say: Financial institutions see 234 regulatory notices per day on average. The RegTech services segment is growing at nearly 28% CAGR through 2031. Firms like ComplyAdvantage use AI and ML to screen transactions against global sanctions, PEP lists and adverse media in real time.

Algorithmic trading and market intelligence

Algorithmic trading uses ML models, including supervised learning, reinforcement learning and deep learning, to analyze structured market data alongside unstructured sources like news, earnings transcripts and social media. The goal is detecting patterns and executing trades at speeds no human trader can match.

What the numbers say: XTX Markets, a London-based algorithmic trading firm, uses ML to scan massive data sets and build statistical models. They broke into the foreign exchange market, a space that larger high frequency firms had not cracked, and invested roughly $199 million in AI chips to maintain their edge. Beyond execution, portfolio managers now use NLP to analyze thousands of research reports and regulatory filings.

Personalized banking and wealth management

Customers expect their banks to know them. They want relevant product suggestions, proactive financial advice and experiences that feel personal rather than mass-produced. AI powered banking software makes this possible at scale and the demographic shift makes it urgent: millennials and Gen Z now make up the majority of fintech users in most growth markets.

What the numbers say: Robo-advisors manage roughly $1.9 trillion in assets globally, on track to reach $2.8 trillion soon. About 55% of robo-advisor users already trust algorithmic recommendations more than human advisors. The global robo-advisory market is expected to grow from $14 billion in 2026 to over $100 billion by 2034. Banks that nail personalization see a 25% improvement in customer satisfaction.

Intelligent customer service

AI customer service in fintech has moved well past the scripted chatbots that frustrate more than they help. Modern systems use large language models fine tuned on financial data to handle complex, multi-turn conversations: resolving disputes, walking through account activity, guiding product applications and handing off to a human when the situation calls for it.

What the numbers say: Bank of America's Erica has handled over 2 billion customer interactions, with 98% resolved without human help. The system has received over 50,000 updates to improve its natural language understanding. Across the industry, AI chatbots are expected to save banks $80 billion in service costs. First contact resolution in retail banking now exceeds 85%, backed by AI voice and virtual assistants.

Challenges you will face when building AI fintech software

No honest guide would skip the hard parts. Knowing what to expect makes the difference between planning for problems and being blindsided by them.

Data privacy and regulatory tangles: Financial data sits under some of the heaviest regulation of any data category. GDPR, CCPA, PSD2/PSD3, BSA/AML and rules from regulators like the OCC, FCA and MAS all apply, sometimes simultaneously. Consumer anxiety about AI in financial services runs high: surveys show concern levels above 94% in markets like Singapore, Australia and Canada.

Bias hiding in historical data: If your lending data reflects decades of biased human decisions, any model you train on that data will repeat and amplify those biases. Fixing this requires deliberate fairness testing and bias auditing before anything reaches production. This is not just an ethical issue. Fair lending laws make it a legal one.

Not enough people with the right skills: Demand for AI professionals who understand financial services outpaces supply by roughly four to one. This pushes up hiring costs, stretches timelines and forces hard build versus buy decisions.

Explainability versus accuracy tradeoffs: The most accurate models tend to be the hardest to explain. In financial services, where regulators increasingly require model interpretability, this creates real tension. Teams that do not design for explainability from the start end up trying to reverse engineer it later, which rarely works well.

Legacy system integration: Most banks run on technology built decades ago. Plugging modern AI infrastructure into legacy core banking systems, old data warehouses and batch processing platforms is a serious engineering challenge that gets underestimated in almost every project plan.

Wrapping up

With 92% of firms investing in AI and the market growing above 22% annually, the question is not whether to adopt but how fast and how well you can execute. The organizations seeing the strongest results, JPMorgan, Goldman Sachs, Morgan Stanley, Bank of America, share common traits: they defined clear problems, invested in data infrastructure, built teams with mixed expertise and committed to iterating continuously.

If you are evaluating partners, look for teams that combine deep AI and machine learning experience with genuine financial services knowledge. Look for people who talk about model governance and regulatory compliance as naturally as they talk about training pipelines. Working with a proven AI Development Company that gets the unique demands of financial services is not just convenient. Given the four to one talent gap, it may be a competitive requirement.

The benefits of AI in fintech are real. But they go to the teams that build with care, ship with discipline and treat AI as a long term capability rather than a one-time project.